- May 14, 2013

- John Belden

When you think you have it all figured out and everything under control, think again. Your evaluation of the situation has likely been developed without proper consideration of the biases that each of us brings to decision making and evaluation. In Part I of our series on Risk Management, “Don’t Become an ERP Horror Story”, we identified how failing to recognize and address decision and assessment biases can repeatedly derail proper ERP risk management. In this blog, we explore the concept of cognitive biases and what steps to take to reduce their influence.

Project risk management is about making decisions regarding uncertain events that might impact the business or the project in a negative or positive way. It involves estimating the probability that these events will occur as well as the potential magnitude of their impact. These assessments, and the mitigation decisions that follow from them, can be erroneous for any number of reasons. In my experience, cognitive biases are often at the foundation of these assessment errors. Cognitive biases are psychological tendencies that cause the human brain to draw incorrect conclusions. The concept of cognitive biases as it relates to decision making was introduced in the literature in 1972. Since that time, more than 100 specific biases have been identified. These tendencies can be a product of experience, social or cultural environment, or motivation.
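The two estimates described above, probability and magnitude of impact, are commonly combined into a single exposure figure for ranking. The following is a minimal sketch of that arithmetic, not a prescribed method: the `Risk` class, the `rank_by_exposure` helper, and the sample risks and dollar figures are all hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    """One entry on a risk register (illustrative structure only)."""
    name: str
    probability: float  # estimated chance the event occurs, 0.0 to 1.0
    impact: float       # estimated cost if it occurs (hypothetical dollars)

    def exposure(self) -> float:
        # Expected cost: probability multiplied by impact.
        return self.probability * self.impact


def rank_by_exposure(risks):
    """Return the risks sorted from highest to lowest exposure."""
    return sorted(risks, key=lambda r: r.exposure(), reverse=True)


# Hypothetical sample data, not drawn from any real project.
risks = [
    Risk("Server failure at go-live", 0.05, 500_000),
    Risk("Data migration defects", 0.30, 200_000),
    Risk("Key users unavailable for testing", 0.50, 40_000),
]

ranked = rank_by_exposure(risks)
for r in ranked:
    print(f"{r.name}: {r.exposure():,.0f}")
```

Because exposure is a simple product, a biased probability or impact estimate shifts the ranking directly, which is why the quality of each input matters so much.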

Consider the opportunities for biases to affect the risk management process, given the different motivations and experiences of the system integrators, your project team, line managers, and senior executives. This leads me to the fifth imperative of ERP Implementation Risk Management (for imperatives 1-4, check out Part II: The Silver Bullet and Part III: Executive Engagement):

Imperative 5: Leaders need to recognize that biases exist in assessing risks. Before you can solve a problem, you need to recognize that you have one to begin with. One thing we can categorically state is that risk assessment is influenced by the biases of the individuals participating.

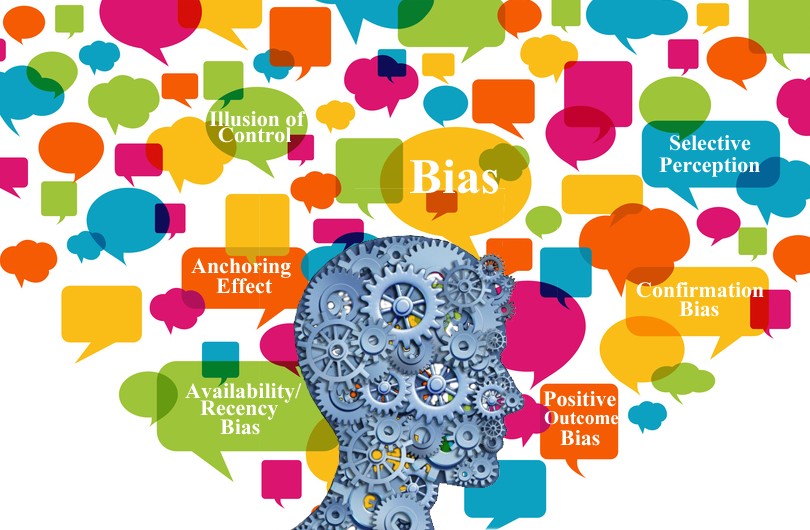

To develop a better understanding of how these biases affect the risk management process, we have outlined a few of the biases most frequently observed.

Anchoring Effect – This is a tendency to rely too heavily on, or anchor to, a past reference when making assessments. People generally tend to believe that the right answer is close to an answer provided by an expert.

Confirmation Bias – A tendency to search for information or opinions that are closely aligned with your own to validate your thinking. Members of the same organization or project team often fall into the confirmation trap because they share a common set of program and project experiences.

Selective Perception – Guarding your own interests or constituents. In the space of ERP implementations, this bias tends to manifest itself in assessing go-live readiness. There is a clear tendency of system integrators to minimize the probability of business disruptions when the reward for such thinking is an increase in the probability of attaining the on-time or under-budget delivery bonus.

Availability / Recency Biases – We will categorize these two together. These biases are a tendency to rely on information that is readily available in memory, which tends to be events that were unusual, vivid, or most recent. Take the example of assessing the probability of a server failure when the assessor recently experienced such a failure on a prior project. In this case, there is a good chance that the assessment of the probability and impact of such an event will be biased.

Optimism or Positive Outcome Biases – Program managers by their nature often carry this trait. It is extremely difficult to do this job unless you believe a lot of things are going to go right. This human tendency also manifests itself when there is a negative consequence to delivering bad news.

Hubris or Illusion of Control – This bias manifests itself in the belief that program managers have the ability to control events that they clearly cannot. This often occurs when considering an individual’s ability to sway an executive’s opinion of a situation.

Given the significance of cognitive biases and the influence they have on the risk management process, it is important to understand a few of the techniques that can be used to minimize their effect.

1. Work with Multiple Anchors – obtain multiple independent opinions of probable risks and potential impacts. Having in hand 2-3 assessments that are not influenced by each other will help in properly positioning risk on your matrix. If the assessments are significantly different, treat that as a signal to dig deeper into the situation.

2. Probe the Expertise of the Experts – when an expert provides an assessment of a situation, you expect them to draw on their past experience and education. Program managers need to dig deep into the assessor’s credentials to confirm relevant industry, geographic, domain, and project team experience. It is critical to make sure your experts are, in fact, experts.

3. Make Decisions Based on Empirical Data, Not Intuition – whenever possible, sequence your assessment process so that you gather data first and assess risk second. Asking for an opinion and then obtaining the supporting data is a leading cause of confirmation bias.

4. Tap Into Unbiased Experts – engage experts from outside the core project team who have a limited stake in the outcome. The best advice often comes from those who have the least to gain or lose.
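The first technique, working with multiple anchors, lends itself to a simple check: collect the independent estimates, then flag the risk for a closer look when they diverge. The sketch below is one possible way to do this under stated assumptions. The `compare_anchors` function, the 0.15 spread threshold, and the assessor names and values are all hypothetical; an appropriate threshold would depend on the project.

```python
from statistics import median


def compare_anchors(estimates, max_spread=0.15):
    """Summarize independent probability estimates for one risk.

    estimates:  dict mapping assessor name -> probability (0.0 to 1.0)
    max_spread: assumed threshold; a larger gap between the highest and
                lowest estimate flags the risk for deeper review
    """
    if len(estimates) < 2:
        raise ValueError("need at least two independent estimates")
    values = list(estimates.values())
    spread = max(values) - min(values)
    return {
        "median": median(values),          # a central value to position the risk
        "spread": spread,                  # disagreement between assessors
        "needs_review": spread > max_spread,
    }


# Hypothetical estimates of the same go-live risk from three sources.
result = compare_anchors({
    "system_integrator": 0.05,
    "internal_program_manager": 0.20,
    "independent_advisor": 0.35,
})
print(result["needs_review"])
```

Using the median rather than the mean keeps a single extreme estimate from dragging the central value, while the spread preserves the fact that the assessors disagree.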

By merging these four techniques, we form the sixth imperative of ERP Implementation Risk Management:

Imperative 6: Engage independent agents in assessing risks. Independent experts can provide separate assessments for anchors, probe the expertise of the system integrator, offer empirical data, and most importantly, maintain a limited stake in the outcome.

A good program manager is not one who believes they fully understand all aspects of a program, but rather one who encourages good questions so that big decisions and significant risks are fully vetted. Our next post in this series will dig into the inter-relationships of risks and the compounding that can occur.

We welcome your thoughts, feedback and the opportunity to engage in collaborative discussions. Please do not hesitate to contact David Blake with any feedback or inquiries or leave us a comment. We look forward to hearing from you.
